Running Kubernetes in a production environment is an increasingly popular way to ensure scalability and increase the availability of web applications. This article argues in favor of Kubernetes in another critical phase of the software development life cycle – the testing phase.

Many developers may hesitate to get on board with this idea because they are inexperienced in administering Kubernetes. However, if carefully planned, implementing this container orchestration platform in testing provides a realistic simulation of the production environment without much added effort.

Kubernetes-Based Testing

Organizations that want to integrate Kubernetes into their workflow should be aware that it has a steep learning curve and needs experienced DevOps engineers or system administrators to run it. However, it quickly pays off in added flexibility and efficiency, and in the velocity with which developers can deploy new app versions.

Kubernetes test environments can be created locally using Minikube, k3s, or MicroK8s. This approach is usually adequate for resource-light applications that do not require much in terms of supporting infrastructure. However, while setting up local sandboxes is easy, it also comes with three important drawbacks:

- Developers are forced to become small-scale Kubernetes administrators. While learning how to deploy apps and maintain a cluster is not an insurmountable obstacle, cluster administration creates added tasks and interrupts the development workflow.

- Resource-hungry applications put a strain on local clusters. A single machine offers limited resources, resulting in a poor testing experience.

- Test deployment in a local cluster does not perfectly replicate the production environment. Given that the primary purpose of sandboxing is to create a replica of the production conditions, local sandboxes have limited utility.
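To make the resource drawback concrete, the following minimal sketch adds up the CPU and memory requests of a small application and checks them against a single machine. The workload names, request sizes, and machine size are hypothetical and chosen only for illustration. The quantity strings follow the Kubernetes convention (`500m` is half a CPU core, `Mi` and `Gi` are binary memory units), but the parser handles only the suffixes used here.

```python
# Hypothetical workloads: names, requests, and replica counts are illustrative only.
WORKLOADS = [
    {"name": "api", "cpu": "500m", "memory": "512Mi", "replicas": 3},
    {"name": "db", "cpu": "2", "memory": "4Gi", "replicas": 1},
    {"name": "search", "cpu": "1", "memory": "2Gi", "replicas": 2},
]

_MEMORY_UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def parse_cpu(quantity):
    """Convert a CPU quantity such as '500m' or '2' to a number of cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity):
    """Convert a memory quantity with a Ki, Mi, or Gi suffix to bytes."""
    for suffix, factor in _MEMORY_UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[:-2]) * factor
    return int(quantity)  # no suffix: plain bytes


def fits(workloads, node_cpu, node_memory):
    """Return (fits, total_cores, total_bytes) for the summed requests."""
    cpu = sum(parse_cpu(w["cpu"]) * w["replicas"] for w in workloads)
    mem = sum(parse_memory(w["memory"]) * w["replicas"] for w in workloads)
    ok = cpu <= parse_cpu(node_cpu) and mem <= parse_memory(node_memory)
    return ok, cpu, mem


# Assumed developer machine: 4 cores and 8 GiB available to the local cluster.
ok, cpu, mem = fits(WORKLOADS, "4", "8Gi")
print(f"requested {cpu} cores and {mem / 2**30:.1f} GiB, fits locally: {ok}")
```

In this made-up case, the requests alone exceed the machine before Kubernetes itself, the IDE, or the browser are counted, which is the situation the second drawback describes.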

Bare Metal Cloud S.0 server instances provide a cost-effective dedicated server solution for development projects starting at $0.10/h or $67.00/m.

Follow our guide to spin up a sandbox environment on BMC in just a couple of minutes.

The Best Kubernetes Experience Is in the Cloud

The key features of Kubernetes that make it a smart choice for testing environments are fault tolerance and auto-healing. These features facilitate resource scaling and work best in cloud-native infrastructure environments that can scale resources on demand.

All three problems mentioned above are solved by creating a dedicated test cluster on cloud-native infrastructure. The cloud provides much-needed flexibility for setting up efficient sandbox environments. It also takes the burden off developers, because the experienced administrators who already maintain production clusters can also maintain shared test clusters.

Automated infrastructure in cloud-native environments dramatically facilitates the testing of resource-hungry applications. The benefits of cloud-based automation are:

- Ability to scale horizontally and reduce costs by paying only for what you use.

- Standardization of the application definition and deployment.

- Compatibility with DevOps pipelines.

- Proper geo-distribution of instances, which helps overcome latency problems when teams are far away from each other.
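The pay-per-use point can be illustrated with simple arithmetic based on the S.0 prices quoted earlier in this article ($0.10/h or $67.00/m). The sketch below assumes that these are two alternative billing options for the same instance, that test servers are deleted outside working hours, and that no other charges apply. The usage pattern (three nodes, eight hours a day, 22 working days) is hypothetical.

```python
# Prices from this article, stored in cents to avoid floating-point rounding.
HOURLY_CENTS = 10      # $0.10 per hour
MONTHLY_CENTS = 6700   # $67.00 per month


def hourly_cost_cents(hours):
    """Cost of one server billed by the hour for the given number of hours."""
    return hours * HOURLY_CENTS


def break_even_hours():
    """Hours per month at which hourly billing costs as much as monthly billing."""
    return MONTHLY_CENTS / HOURLY_CENTS


# Hypothetical usage: a three-node test cluster, 8 hours a day, 22 working days.
NODES = 3
HOURS_PER_NODE = 8 * 22

on_demand = NODES * hourly_cost_cents(HOURS_PER_NODE)
reserved = NODES * MONTHLY_CENTS

print(f"hours per node:  {HOURS_PER_NODE}")
print(f"hourly billing:  ${on_demand / 100:.2f}")
print(f"monthly billing: ${reserved / 100:.2f}")
print(f"break-even:      {break_even_hours():.0f} hours per node per month")
```

Under these assumptions, a cluster that exists only during working hours stays well below the break-even point, while a cluster that runs around the clock is cheaper on the monthly rate.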

While, in theory, any IaaS could be used for this purpose, bare metal solutions tend to perform best. Using bare metal as the foundation for automated infrastructure ensures dedicated resources and no virtualization overhead.

phoenixNAP’s Bare Metal Cloud is one example of a dedicated platform that can provide Kubernetes clusters on demand with direct access to CPU and RAM resources for accelerated performance. Unlike traditional bare metal servers, Bare Metal Cloud is delivered on a cloud-like model and deployed in minutes. It also has its own API and CLI, along with support for a number of infrastructure-as-code integrations, making it possible for developers to manage it using familiar tools.

An efficient sandbox also needs to run on flexible infrastructure. This flexibility is best achieved by using Bare Metal Cloud servers built with components such as 3rd Gen Intel® Xeon® Scalable Processors that can be optimized for many different workload types.

Streamline Deployments with Rancher

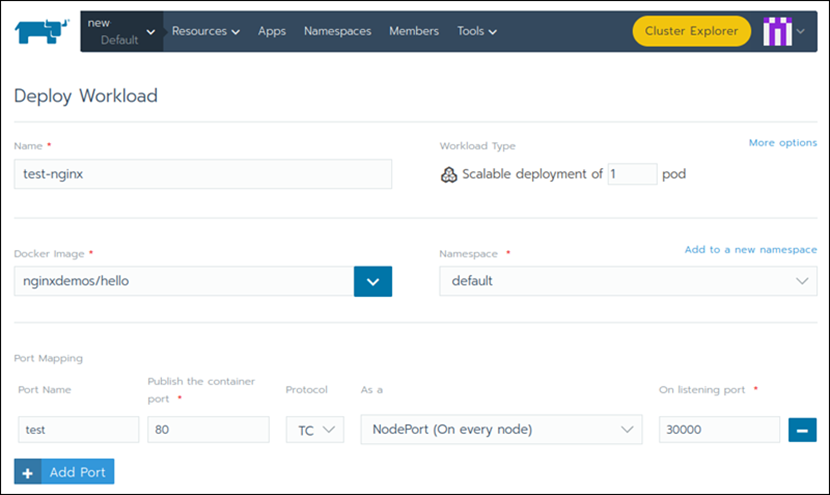

A popular way to streamline cluster deployment is to employ Rancher to manage the Kubernetes test cluster. Rancher acts as an easy-to-use but robust web UI and proxy for Kubernetes. It is entirely open source and supports all certified K8s distributions. For on-premises deployment, it uses RKE (Rancher Kubernetes Engine); in the cloud, it supports GKE, EKS, and AKS, as well as K3s for edge workloads.

Rancher offers a comprehensive platform for workload deployment, secret management, load balancing, and cluster health monitoring. It also features built-in IaC tools for provisioning servers that greatly improve and streamline the testing experience.

Deploying an app in Rancher is done through a clean and straightforward interface. After the cluster is registered in Rancher, the Deploy Workload page allows users to create quick, scalable deployments.

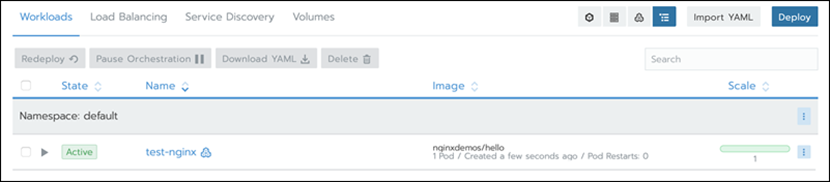

Users can find all the created workloads on the Workloads page. Here they can view or change deployment rules related to upgrading, scheduling, and scaling of pods.
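For readers who want to see what sits behind such a form, a scalable workload is commonly represented in Kubernetes as a Deployment object. The sketch below builds a minimal one in Python and prints it as JSON. It is an illustration under stated assumptions, not the exact object Rancher generates: the function name, the `demo-web` name, the `nginx:1.25` image, and the rolling-update values are placeholders chosen for this example.

```python
import json


def deployment_manifest(name, image, replicas=2, port=80):
    """Build a minimal apps/v1 Deployment as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Scaling rule: how many identical pods to keep running.
            "replicas": replicas,
            # The selector must match the pod template labels below.
            "selector": {"matchLabels": labels},
            # Upgrade rule: replace pods one at a time without dropping capacity.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


# Placeholder name and image, used only for illustration.
manifest = deployment_manifest("demo-web", "nginx:1.25", replicas=3)
print(json.dumps(manifest, indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the output could be applied to a test cluster with `python deployment.py | kubectl apply -f -`, assuming the script is saved as `deployment.py` and `kubectl` points at that cluster.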

Rancher provides centralized authentication, observability, and access control for operators. It lets developers and QA testers focus on writing code and testing, since their interaction with Kubernetes boils down to a couple of clicks.

For teams just looking to start using Rancher and Kubernetes, the easiest way to get them up and running is through cloud platform integrations. phoenixNAP’s Bare Metal Cloud includes multiple instances with pre-installed Rancher software, eliminating the need to build the integration from scratch.

Conclusion

Using Kubernetes as the go-to option for application testing helps you come closer to emulating a full production environment, making QA easier and more efficient.

While Kubernetes can be set up locally, its best features come into focus when it is deployed using cloud infrastructure. Tools such as Rancher and cloud-native environments such as Bare Metal Cloud help streamline the Kubernetes deployment and make it easier for developers and QA testers to focus on their work.
