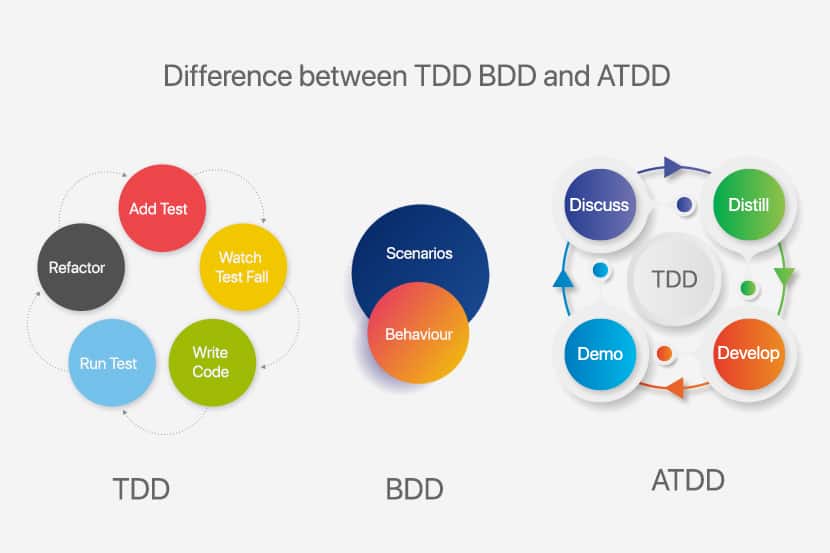

Test-driven development (TDD) and Behavior-driven development (BDD) are both test-first approaches to Software Development. They share common concepts and paradigms, rooted in the same philosophies. In this article, we will highlight the commonalities, differences, pros, and cons of both approaches.

What is Test-driven development (TDD)?

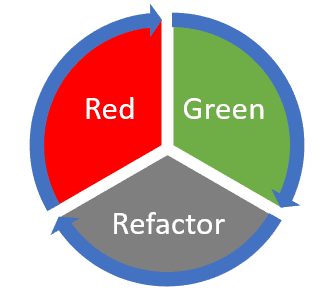

Test-driven development (TDD) is a software development process that relies on the repetition of a short development cycle: requirements turn into very specific test cases. The code is written to make the test pass. Finally, the code is refactored and improved to ensure code quality and eliminate any technical debt. This cycle is well-known as the Red-Green-Refactor cycle.

What is Behavior-driven development (BDD)?

Behavior-driven development (BDD) is a software development process that encourages collaboration among all parties involved in a project’s delivery. It encourages defining and formalizing a system’s behavior in a common language understood by all parties, and it uses this definition as the seed for a TDD-based process.

Key Differences Between TDD and BDD

| | TDD | BDD |
| --- | --- | --- |
| Focus | Delivery of a functional feature | Delivering on expected system behavior |
| Approach | Bottom-up or Top-down (Acceptance-Test-Driven Development) | Top-down |
| Starting Point | A test case | A user story/scenario |
| Participants | Technical Team | All Team Members including Client |
| Language | Programming Language | Lingua Franca |
| Process | Lean, Iterative | Lean, Iterative |
| Delivers | A functioning system that meets our test criteria | A system that behaves as expected and a test suite that describes the system’s behavior in common human language |
| Avoids | Over-engineering, low test coverage, and low-value tests | Deviation from intended system behavior |
| Brittleness | Change in implementation can result in changes to the test suite | Test suite only needs to change if the system behavior is required to change |
| Difficulty of Implementation | Relatively simple for Bottom-up, more difficult for Top-down | Bigger learning curve for all parties involved |

Test-Driven Development (TDD)

In TDD, we have the well-known Red-Green-Refactor cycle. We start with a failing test (red) and implement as little code as necessary to make it pass (green). This process is also known as Test-First Development. TDD also adds a Refactor stage, which is equally important to overall success.

The TDD approach was discovered (or perhaps rediscovered) by Kent Beck, one of the pioneers of unit testing, Extreme Programming, and Agile software development.

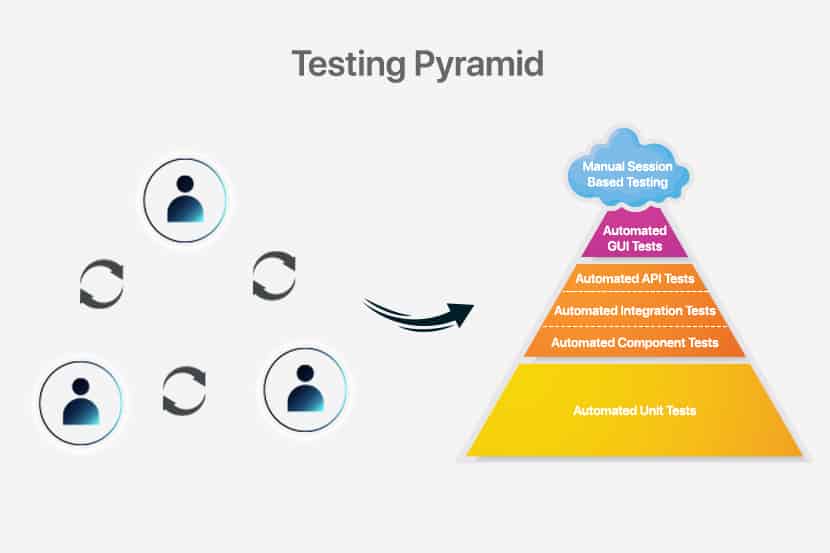

The diagram above gives an easily digestible overview of the process. However, the beauty is in the details. Before delving into each individual stage, we must also discuss two high-level approaches to TDD, namely bottom-up and top-down TDD.

Bottom-Up TDD

The idea behind Bottom-Up TDD, also known as Inside-Out TDD, is to build functionality iteratively, focusing on one entity at a time, solidifying its behavior before moving on to other entities and other layers.

We start by writing unit-level tests and implementing the code that makes them pass. We then write higher-level tests that aggregate the functionality covered by the lower-level tests, implement what each aggregate test requires, and so on. Building up layer by layer, we eventually reach a stage where the aggregate test is an acceptance-level test, one that hopefully falls in line with the requested functionality. This makes bottom-up TDD a highly developer-centric approach, mainly intended to make the developer’s life easier.
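
The bottom-up flow can be sketched in Python with plain assert-based tests. This is only an illustration under assumptions: the shopping-cart domain and the names `LineItem` and `Cart` are hypothetical and do not come from any particular project. The lowest-level entity is tested and implemented first, and the aggregate is built on top of it afterwards.

```python
from dataclasses import dataclass, field


# Step 1: the lowest-level (hypothetical) entity, driven by its own unit test.
@dataclass(frozen=True)
class LineItem:
    unit_price_cents: int
    quantity: int

    def subtotal_cents(self) -> int:
        return self.unit_price_cents * self.quantity


def test_line_item_subtotal():
    assert LineItem(unit_price_cents=250, quantity=3).subtotal_cents() == 750


# Step 2: a higher-level entity that aggregates the already-tested pieces.
@dataclass
class Cart:
    items: list = field(default_factory=list)

    def add(self, item: LineItem) -> None:
        self.items.append(item)

    def total_cents(self) -> int:
        return sum(item.subtotal_cents() for item in self.items)


def test_cart_total_aggregates_line_items():
    cart = Cart()
    cart.add(LineItem(unit_price_cents=250, quantity=3))
    cart.add(LineItem(unit_price_cents=100, quantity=1))
    assert cart.total_cents() == 850
```

Note that nothing here says whether a cart total is what the customer actually asked for; that question is only answered once the layers reach the acceptance level.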

| Pros | Cons |
| --- | --- |
| Focus is on one functional entity at a time | Delays integration stage |
| Functional entities are easy to identify | Amount of behavior an entity needs to expose is unclear |
| High-level vision not required to start | High risk of entities not interacting correctly with each other thus requiring refactors |
| Helps parallelization | Business logic possibly spread across multiple entities making it unclear and difficult to test |

Top-Down TDD

Top-Down TDD is also known as Outside-In TDD or Acceptance-Test-Driven Development (ATDD). It takes the opposite approach: we start by building the system as a whole, iteratively adding more detail to the implementation and breaking it down into smaller entities as refactoring opportunities become evident.

We start by writing an acceptance-level test and proceed with a minimal implementation. The implementation also needs to be done incrementally: before creating any new entity or method, we first write a test at the appropriate level. We thus iteratively refine the solution until it solves the problem that kicked off the whole exercise, that is, until the acceptance test passes.

This makes Top-Down TDD a more business/customer-centric approach. It is more challenging to get right, as it relies heavily on good communication between the customer and the team. It also requires discipline from the developer, as each next iterative step needs careful consideration. The process speeds up over time but does have a learning curve. The benefits, however, outweigh the negatives: collaboration between customer and team takes center stage, and the result is a system with well-defined behavior and clearly defined flows, a focus on integrating first, and a predictable workflow and outcome.
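
As a rough sketch of the outside-in flow in Python, the example below starts from a single acceptance-level test and uses a hand-written test double for a collaborator that does not exist yet. The checkout domain and the names `CheckoutService` and `FakePaymentGateway` are hypothetical, chosen only to illustrate the idea.

```python
class FakePaymentGateway:
    """Test double standing in for a payment provider not built yet."""

    def __init__(self):
        self.charges = []

    def charge(self, amount_cents: int) -> bool:
        # Record the call so the test can inspect the interaction.
        self.charges.append(amount_cents)
        return True


class CheckoutService:
    """Minimal implementation, written only to satisfy the test below."""

    def __init__(self, gateway):
        self.gateway = gateway

    def checkout(self, prices_cents) -> str:
        total = sum(prices_cents)
        return "confirmed" if self.gateway.charge(total) else "declined"


def test_customer_can_check_out_a_basket():
    # Acceptance-level test: it describes the whole flow from the outside.
    gateway = FakePaymentGateway()
    service = CheckoutService(gateway)

    assert service.checkout([250, 100]) == "confirmed"
    assert gateway.charges == [350]
```

In a real project, each new entity discovered while making this test pass (a real gateway client, a price calculator, and so on) would get its own lower-level test before being implemented.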

| Pros | Cons |
| --- | --- |
| Focus is on one user-requested scenario at a time | Critical to get the acceptance test right, thus requiring collaborative discussion between business/user/customer and team |
| Flow is easy to identify | Relies on Stubbing, Mocking and/or Test Doubles |
| Focus is on integration rather than implementation details | Slower start as the flow is identified through multiple iterations |
| Amount of behavior an entity needs to expose is clear | More limited parallelization opportunities until a skeleton system starts to emerge |
| User Requirements, System Design and Implementation details are all clearly reflected in the test suite | |
| Predictable | |

The Red-Green-Refactor Life Cycle

Armed with the above-discussed high-level vision of how we can approach TDD, we are free to delve deeper into the three core stages of the Red-Green-Refactor flow.

Red

We start by writing a single test, execute it (thus having it fail) and only then move to the implementation of that test. Writing the correct test is crucial here, as is agreeing on the layer of testing that we are trying to achieve. Will this be an acceptance level test or a unit level test? This choice is the chief delineation between bottom-up and top-down TDD.

Green

During the Green-stage, we must create an implementation to make the test defined in the Red stage pass. The implementation should be the most minimal implementation possible, making the test pass and nothing more. Run the test and watch it pass.

Creating the most minimal implementation possible is often the challenge here as a developer may be inclined, through force of habit, to embellish the implementation right off the bat. This result is undesirable as it will create technical baggage that, over time, will make refactoring more expensive and potentially skew the system based on refactoring cost. By keeping each implementation step as small as possible, we further highlight the iterative nature of the process we are trying to implement. This feature is what will grant us agility.

Another key aspect is that the Red-stage, i.e., the tests, is what drives the Green-stage. There should be no implementation that is not driven by a very specific test. If we are following a bottom-up approach, this pretty much comes naturally. However, if we’re adopting a top-down approach, then we must be a bit more conscientious and make sure to create further tests as the implementation takes shape, thus moving from acceptance level tests to unit-level tests.
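
As an illustration of the Green stage, the sketch below assumes a hypothetical `slugify` function with exactly one test written for it so far. The implementation does just enough to make that one test pass.

```python
def slugify(title: str) -> str:
    # The simplest code that satisfies the one existing test, nothing more.
    return title.lower().replace(" ", "-")


def test_slugify_lowercases_and_joins_words_with_hyphens():
    assert slugify("Hello World") == "hello-world"
```

Handling of punctuation or repeated spaces is deliberately absent. Under this approach, each such case would first be introduced as a new failing test, and only then implemented.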

Refactor

The Refactor-stage is the third pillar of TDD. Here the objective is to revisit and improve on the implementation. The implementation is optimized, code quality is improved, and redundancy eliminated.

Refactoring can have a negative connotation for many, being perceived as a pure cost, fixing something improperly done the first time around. This perception originates in more traditional workflows where refactoring is primarily done only when necessary, typically when the amount of technical baggage reaches untenable levels, thus resulting in a lengthy, expensive, refactoring effort.

Here, however, refactoring is an intrinsic part of the workflow and is performed iteratively. This dramatically reduces the cost of refactoring. The code is not entirely reworked; instead, it slowly evolves. Moreover, the refactored code is, by definition, covered by a test, one that has already passed in a previous iteration of the code. Thus, refactoring can be done with confidence, resulting in further speed-up. This iterative approach to improving the codebase also allows for emergent design, which drastically reduces the risk of over-engineering the problem.

It is critical that behavior does not change and that no extra functionality is added during the Refactor stage. This constraint is what keeps the existing tests valid as a safety net: if they still pass after the change, the refactor has preserved the behavior they describe.
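
The sketch below illustrates a behavior-preserving refactor in Python. The order-total domain, the 20% tax rate, and all function names are hypothetical. The point is that the same assertions run unchanged against the code before and after the refactor.

```python
# Before: passes its tests, but the tax rule is duplicated.
def order_total_before(net_cents: int, shipping_cents: int) -> int:
    net_with_tax = net_cents + net_cents * 20 // 100
    shipping_with_tax = shipping_cents + shipping_cents * 20 // 100
    return net_with_tax + shipping_with_tax


# After: same behavior, with the duplication extracted into a named helper.
TAX_PERCENT = 20


def with_tax(amount_cents: int) -> int:
    return amount_cents + amount_cents * TAX_PERCENT // 100


def order_total_after(net_cents: int, shipping_cents: int) -> int:
    return with_tax(net_cents) + with_tax(shipping_cents)


def check_order_total(order_total) -> None:
    # The same assertions are run unchanged before and after the refactor.
    assert order_total(1000, 500) == 1800
    assert order_total(0, 0) == 0
    assert order_total(199, 1) == 239
```

Running `check_order_total` against both versions passes, which is the evidence that the refactor changed structure only and not behavior.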

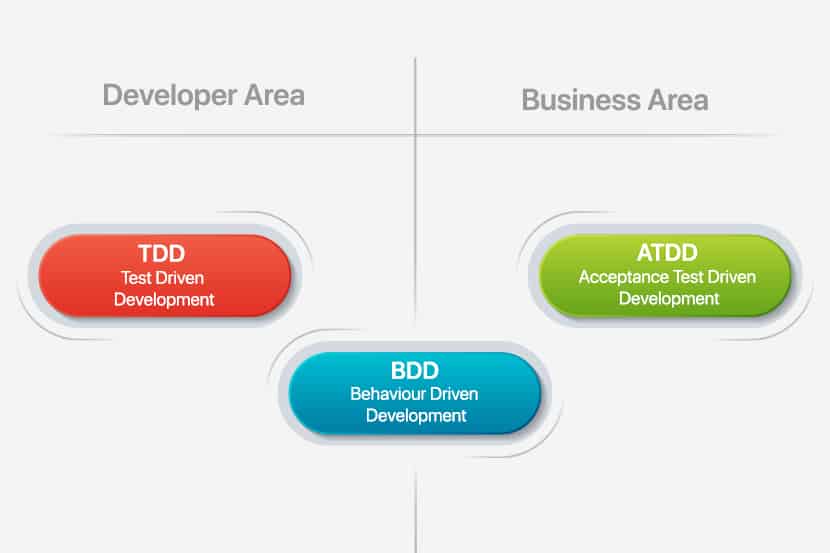

Behavior-Driven Development (BDD)

As previously discussed, TDD (or bottom-up TDD) is a developer-centric approach aimed at producing a better code-base and a better test suite. In contrast, ATDD is more customer-centric and aimed at producing a better solution overall. We can consider Behavior-Driven Development as the next logical progression from ATDD. Dan North’s experiences with TDD and ATDD led him to propose BDD, whose aim was to bring together the best aspects of TDD and ATDD while eliminating the pain points he had identified in the two approaches. What he found was that descriptive test names were helpful and that testing behavior was much more valuable than functional testing.

Dan North does a great job of succinctly describing BDD as “Using examples at multiple levels to create shared understanding and surface certainty to deliver software that matters.”

Some key points here:

- What we care about is the system’s behavior

- It is much more valuable to test behavior than to test the specific functional implementation details

- Use a common language/notation to develop a shared understanding of the expected and existing behavior across domain experts, developers, testers, stakeholders, etc.

- We achieve Surface Certainty when everyone can understand the behavior of the system, knows what has already been implemented and what is being implemented, and the system is guaranteed to satisfy the described behaviors

BDD puts even more of the onus on fruitful collaboration between the customer and the team. It becomes even more critical to define the system’s behavior correctly, thus resulting in the correct behavioral tests. A common pitfall here is to make assumptions about how the system will go about implementing a behavior. This mistake results in a test that is tainted with implementation detail, making it a functional test rather than a true behavioral test. This is something we want to avoid.

The value of a behavioral test is that it tests the system without caring how the system achieves its results. This means that a behavioral test should not change over time unless the behavior itself needs to change as part of a feature request. The cost advantage over functional testing is significant, as functional tests are often so tightly coupled to the implementation that a refactor of the code involves a refactor of the test as well.

However, the more substantial benefit is the retention of Surface Certainty. In a functional test, a code-refactor may also require a test-refactor, inevitably resulting in a loss of confidence. Should the test fail, we are not sure what the cause might be: the code, the test, or both. Even if the test passes, we cannot be confident that the previous behavior has been retained. All we know is that the test matches the implementation. This result is of low value because, ultimately, what the customer cares about is the behavior of the system. Thus, it is the behavior of the system that we need to test and guarantee.
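
The difference can be sketched in Python. The example is hypothetical throughout: two interchangeable inbox implementations, one test that checks only observable behavior, and one test that is coupled to an internal attribute. The `passes` helper is a stand-in for a test runner.

```python
from collections import deque


class ListBackedInbox:
    """Original implementation: messages are kept in a list."""

    def __init__(self):
        self._messages = []

    def deliver(self, message: str) -> None:
        self._messages.append(message)

    def unread_count(self) -> int:
        return len(self._messages)


class DequeBackedInbox:
    """Refactored implementation: same behavior, different internals."""

    def __init__(self):
        self._queue = deque()

    def deliver(self, message: str) -> None:
        self._queue.append(message)

    def unread_count(self) -> int:
        return len(self._queue)


def behavioral_test(inbox_cls) -> None:
    # Checks only what an outside observer can see.
    inbox = inbox_cls()
    inbox.deliver("hello")
    assert inbox.unread_count() == 1


def implementation_coupled_test(inbox_cls) -> None:
    # Reaches into a private attribute, so it is tied to one implementation.
    inbox = inbox_cls()
    inbox.deliver("hello")
    assert inbox._messages == ["hello"]


def passes(test, inbox_cls) -> bool:
    """Stand-in for a test runner: True if the test passes for the class."""
    try:
        test(inbox_cls)
    except (AssertionError, AttributeError):
        return False
    return True
```

Here the behavioral test passes for both implementations, while the implementation-coupled test breaks on `DequeBackedInbox` even though the inbox still behaves exactly as before. That failure says nothing about behavior, which is why such a test loses its value during a refactor.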

A BDD-based approach should result in full test coverage where the behavioral tests fully describe the system’s behavior to all parties using a common language. Contrast this with functional testing, where even full coverage gives no guarantee that the system satisfies the customer’s needs, and where the risk and cost of refactoring the test suite itself only increase with more coverage. Of course, leveraging both by working top-down from behavioral tests to more functional tests gives the Surface Certainty benefits of behavioral testing plus the developer-focused benefits of functional testing. It also curbs the cost and risk of functional tests, since they are used only where appropriate.

In comparing TDD and BDD directly, the main changes are that:

- The decision of what to test is simplified; we need to test the behavior

- We leverage a common language which short-circuits another layer of communication and streamlines the effort; the user stories as defined by the stakeholders are the test cases

An ecosystem of frameworks and tools has emerged to allow for common-language-based collaboration across teams, as well as the integration and execution of such behavior descriptions as tests using industry-standard tooling. Examples include Cucumber, JBehave, and FitNesse, to name a few.
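
To give a feel for how a plain-language scenario maps onto a test, the sketch below mirrors the Given/When/Then structure commonly used by such tools inside an ordinary Python test. It does not use the API of Cucumber, JBehave, or FitNesse, and the `Account` class and the scenario itself are hypothetical.

```python
class Account:
    """Hypothetical domain object used only for this illustration."""

    def __init__(self, balance: int):
        self.balance = balance

    def withdraw(self, amount: int) -> bool:
        if amount > self.balance:
            return False
        self.balance -= amount
        return True


def test_withdrawal_is_refused_when_funds_are_insufficient():
    """Scenario: a customer tries to withdraw more than the balance."""
    # Given an account with a balance of 50
    account = Account(balance=50)

    # When the customer tries to withdraw 80
    accepted = account.withdraw(80)

    # Then the withdrawal is refused and the balance is unchanged
    assert accepted is False
    assert account.balance == 50
```

The comments carry the scenario in the words a stakeholder would use, while the code beneath each comment is the only part that depends on the implementation.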

The Right Tool for the Job

As we have seen, TDD and BDD are not really in direct competition with each other. Consider BDD as a further evolution of TDD and ATDD, one that brings more of a customer focus and further emphasizes communication between the customer and the technical team at all stages of the process. The result is a system that behaves as expected by all parties involved, together with a test suite describing the entirety of the system’s many behaviors in a human-readable fashion that everyone has access to and can easily understand. This, in turn, provides a very high level of confidence not only in the implemented system but also in future changes, refactors, and maintenance.

At the same time, BDD is based heavily on the TDD process, with a few key changes. While the customer or particular members of the team may primarily be involved with the top-most level of the system, other team members like developers and QA engineers would organically shift from a BDD to a TDD model as they work their way in a top-down fashion.

We expect the following key benefits:

- Bringing pain forward

- Onus on collaboration between customer and team

- A common language shared between customer and team, leading to shared understanding

- Imposes a lean, iterative process

- Guarantee the delivery of software that not only works but works as defined

- Avoid over-engineering through emergent design, thus achieving desired results via the most minimal solution possible

- Surface Certainty allows for fast and confident code refactors

- Tests have innate value, as opposed to tests created simply to meet an arbitrary code coverage threshold

- Tests are living documentation that fully describes the behavior of the system

There are also scenarios where BDD might not be a suitable option, for example when the system in question is very technical and perhaps not customer-facing at all. In such cases the requirements are more tightly bound to functionality than to behavior, making TDD a possibly better fit.

Adopting TDD or BDD?

Ultimately, the question should not be whether to adopt TDD or BDD, but which approach is best for the task at hand. Quite often, the answer to that question will be both. As projects grow and more people become involved, it will become self-evident that both approaches are needed at different levels and at various times throughout the project’s lifecycle. TDD will give structure and confidence to the technical team, while BDD will facilitate and emphasize communication between all involved parties, ultimately delivering a product that meets the customer’s expectations and offering the Surface Certainty required to evolve the product with confidence in the future.

As is often the case, there is no magic bullet here. What we have instead is a couple of very valid approaches. Knowledge of both will allow teams to determine the best method based on the needs of the project. Further experience and fluidity of execution will enable the team to use all the tools in its toolbox as the need arises throughout the project’s lifecycle, thus achieving the best possible business outcome.